AI-Augmented Innovation Foresight

Executive Summary

In the past, innovation and foresight work was often challenging because relevant information was missing. Today it is challenging because information is everywhere, while the world itself becomes more complex and less stable. Innovation decisions have to manage two tensions at once: short-term change can hit suddenly, yet long-term shifts still shape what will matter next. The task is no longer to choose between “what is urgent now” and “what could become true next,” but to hold both together in one coherent view.

Innovation foresight has always been the anchor in this kind of situation. It is how organisations keep long-term direction in view when the present is noisy, and how they avoid reacting only to the latest shock. What has changed is the pace and density of change, which makes the gap between short-term volatility and long-term choices harder to manage with manual capacity alone.

This is where AI has become so tempting. It brings new potential to handle that dilemma more effectively, not by predicting the future, but by helping teams work with complexity at scale. It can scan broader signal fields, connect developments across domains, and build early structure around what might matter next, so foresight work can explore more paths without losing orientation. But the same capability creates a new risk. AI can produce confident output without solid sources, smooth contradictions into a story that sounds coherent, and hide bias behind fluent language. In foresight work, this is dangerous, because assumptions travel. They shape search, framing, and priorities, and if they are wrong, they often fail late, when decisions are already committed and course correction is expensive.

At Z_punkt, we use AI as a complement to human thinking, not as a replacement for it. Human judgement stays in the loop from start to finish, by design. For us, AI-augmented innovation foresight is not only about better decision support. It is about helping organisations recognise change earlier, explore new opportunity spaces, and turn uncertainty into coordinated action. We still do hands-on research and analysis, we still run team workshops and brainstorming sessions, and we still rely on experience and domain understanding that AI does not have. AI helps us widen the view, structure material, and challenge our thinking. Our work stays human-led, and AI stays a sparring partner that makes our work stronger.

Our mission is clear: we do not use AI merely to accelerate existing work. We use it to strengthen an organisation’s ability to adapt to new realities, explore new horizons, and lead decisions and teams under uncertainty. This is how AI-augmented innovation foresight creates value at Z_punkt: not as automated judgement, but as a disciplined contribution to orientation, exploration, and responsible action.

This paper shares the Z_punkt approach to AI in innovation foresight. It explains how we increase the benefits of AI while reducing its typical failure modes, and how we translate AI-supported work into decision-ready outcomes that clients can trust, challenge, and use.

1. Why AI in Innovation Foresight now

Innovation foresight exists for moments like the one many organisations face today. When markets shift quickly and long-term forces still matter, teams need a way to hold both horizons in one view, without getting pulled into short-term reaction or long-term speculation. In practice, this creates three simultaneous tasks: organisations must adapt faster to new realities, explore new horizons more deliberately, and lead teams through uncertainty with clarity and responsibility. Foresight provides that anchor because it turns change into practical, decision-oriented questions: What is changing, and why? What could plausibly change next? What would be disruptive if it became true, and what would it mean for customers, business models, and capabilities? Which signals would tell us that a shift is real rather than noise? And how should we prepare today in a way that still makes sense if the future unfolds differently than expected?

What has changed is the workload behind these questions. The signal landscape is denser than it used to be. Relevant developments sit across technology, regulation, consumer behaviour, business models, and geopolitics, and they interact in ways that are hard to track with manual capacity alone. This does not only increase effort. It also increases the risk of blind spots and default narratives, where teams repeat the same story because it is familiar, not because it is best supported by evidence.

At Z_punkt, we see AI as a way to extend our capacity in exactly those parts of the work that are heavy on scanning and structuring, while keeping interpretation and judgement human. AI can help scan broader signal fields, structure early views, compare interpretations, and revisit earlier assumptions when new information appears. Done well, this helps organisations adapt earlier to emerging realities, explore more possibilities without losing clarity, and give leaders a stronger basis for coordinated action under uncertainty.

At the same time, AI introduces risks that are specific to foresight and innovation work. It can sound certain when evidence is weak. It can smooth contradictions into a story that feels coherent. It can hide bias behind fluent language, and it can quietly turn assumptions into statements of fact. In innovation foresight, this matters because assumptions travel. They shape what we look for, how we interpret signals, and which options get explored. If the assumptions are wrong, they often fail late, when organisations have already committed time, budget, and reputation.

This is why, at Z_punkt, we do not “hand over” foresight to AI. We use AI where it is strong, and we keep the rest explicitly human. We still do primary research. We still read, interview, analyse, and synthesise ourselves. We still debate interpretations in the team, challenge each other, and use the craft of foresight. AI supports the work, but it does not own the work.

2. What can go wrong when AI enters Innovation Foresight

AI can strengthen innovation foresight, but only if it is used with discipline. Without a clear way of working, AI often increases output faster than clarity, and it can reinforce weak logic instead of challenging it. In our experience, the problems are rarely technical. They are about transparency, review, and ownership.

- A first failure mode is apparent evidence. AI outputs can sound precise and complete, while the underlying sources are unclear, outdated, or missing. In foresight work this is risky, because one unverified claim can quickly become an assumption that shapes later framing and priorities. At Z_punkt, we treat source visibility as non-negotiable for anything that influences decisions.

- A second failure mode is hidden assumptions. AI is good at filling gaps and smoothing contradictions. That can make a narrative feel coherent even when key conditions are still unknown. Teams stop asking what must be true for an idea to work, and they start debating conclusions instead of testing assumptions. At Z_punkt, we surface assumptions early, separate observation from interpretation, and keep uncertainty visible where it belongs.

- A third failure mode is false certainty. AI language often sounds confident, even when the best answer is “it depends” or “we don’t know yet.” In innovation foresight, this can push teams into early closure, because the output feels like a decision. At Z_punkt, we use AI to widen the space of possibilities and to stress-test logic, not to end debates prematurely.

- A fourth failure mode is missing traceability. When teams cannot show why one option beat another, decisions become difficult to defend and discussions repeat. In foresight-led innovation this is costly, because the real work is often not one workshop, but the sequence of decisions that follow. At Z_punkt, we keep a clear thread from signals to implications to options to recommendations, so clients can challenge the logic and still work with it.

Two organisational patterns often sit underneath these failure modes.

The first is capability concentration. Sometimes a few individuals become the internal “prompt experts.” They get strong results, but the organisation cannot reproduce them reliably, and learning does not scale. At Z_punkt, we avoid this by working with shared standards, explicit review points, and team-level ways of working, so quality is not tied to one person’s style.

The second is template overfitting. Teams use the same prompt template for very different questions and get familiar-looking output. It feels structured, but it often misses what is unique in the case. At Z_punkt, we avoid “one template fits all.” We standardise quality, not thinking. We use shared standards and reusable output formats, but we design the AI use around the decision that needs support and the evidence that is available.

None of this is a reason to avoid AI. It is a reason to use it responsibly. For innovation foresight, the key is simple: AI can support scanning, structuring, comparing, and drafting, but responsibility stays with the people who will act on the work. At Z_punkt, we therefore treat guardrails, review points, and risk management as part of the method, not as add-ons.

In the next chapter, we describe how we do this in practice, and how our approach increases the benefits of AI while reducing its typical failure modes.

3. Our AI principles at Z_punkt

At Z_punkt, we don’t use AI to do the same work faster. We use it to do better work differently. That means we do not chase “impressive output.” We build a way of working that makes AI support reliable, useful, and safe in real projects.

We start from the decision a client needs to make and the value the work must create. Then we decide where AI helps, and where it should not be used. We use AI to widen search, structure material, compare interpretations, and challenge our thinking. We do not use AI to outsource judgement. At Z_punkt, the interpretation work stays human-led, and it stays visible. We still do hands-on research and analysis, and we still do the team discussions where the real thinking happens.

AI can also create failure modes that are especially risky in foresight: confident output without strong sources, clean stories that hide contradictions, and assumptions that quietly turn into “facts.” That is why our approach is deliberately systemic. We combine clear guardrails with practical performance unlockers, so benefits rise while typical risks are reduced.

3.1 AI Guardrails: what we do not compromise on

Our guardrails are not a compliance appendix. They are the conditions that make AI use reliable in real project work.

We keep a shared AI mindset across our team. Everyone needs the same basic understanding: AI can support scanning, structuring, drafting, and comparison, but it cannot guarantee truth, relevance, or responsibility. That sounds obvious, yet many problems start when teams forget it and treat fluent output as if it were evidence.

We also treat responsibility, compliance, and risk management as part of the method from day one. We are explicit about data boundaries and confidentiality, and we are disciplined about what can be used where. Just as important, we avoid “black box claims” in decision-relevant work. If a claim matters for a recommendation, our experts at Z_punkt make sure it is traceable, clearly labelled, and open to challenge.

Finally, we make human responsibility explicit. AI supports. Our experts at Z_punkt decide what is relevant, what counts as credible evidence, where uncertainty must remain visible, and what conclusions are responsible to draw. Accountability does not move to the tool.

3.2 AI Performance unlockers: what makes AI use actually valuable

Guardrails keep the work safe and credible. The unlockers make it useful and high-performing in practice. We design AI-supported foresight so that teams can think better together, with shared standards, transparent logic, and review moments that build trust, not black-box dependence.

First, we use AI as a sparring partner, not as an answer machine. We deliberately ask for counter-arguments, alternative frames, and weak spots in the logic. The goal is not agreement with the first narrative. The goal is better thinking and earlier clarity about what might break.

Second, we keep humans in the loop through explicit review points and shared sense-making. AI can draft and structure quickly, but our experts at Z_punkt review and challenge the output at the moments that matter: when sources are selected, when assumptions are formed, when options are compared, and when recommendations are written. These reviews are not a polite final step. They are built into the workflow so teams can align around explicit logic, shared responsibility, and clearer leadership under uncertainty.

Third, we standardise quality, not thinking. We use shared standards and reusable output formats so work is comparable across projects, but we avoid “one prompt fits all”. We do not force every question through the same template. We design the AI use around the decision that needs support and the evidence that is available, while keeping outputs consistent in how they show sources, assumptions, trade-offs, and uncertainties.

Fourth, we go beyond “just an LLM” when it is useful. Innovation foresight work often needs structured sources, clear evidence trails, and systematic comparison. Where it makes sense, we combine models with tools, databases, and structured methods, so the work remains grounded and reviewable rather than purely text-based.

Fifth, we treat AI capability as continuous learning. Tools evolve quickly, and so do the right practices. We improve workflows over time, update standards as the landscape changes, and share what works internally so quality does not depend on individual habits. This is how AI use becomes a stable organisational capability, not a sequence of one-off experiments.

Taken together, these guardrails and unlockers allow a very practical outcome: we can widen the search space, make assumptions explicit, and compare options earlier, while keeping judgement, responsibility, and quality firmly with our experts at Z_punkt and with the client teams who will act on the work.

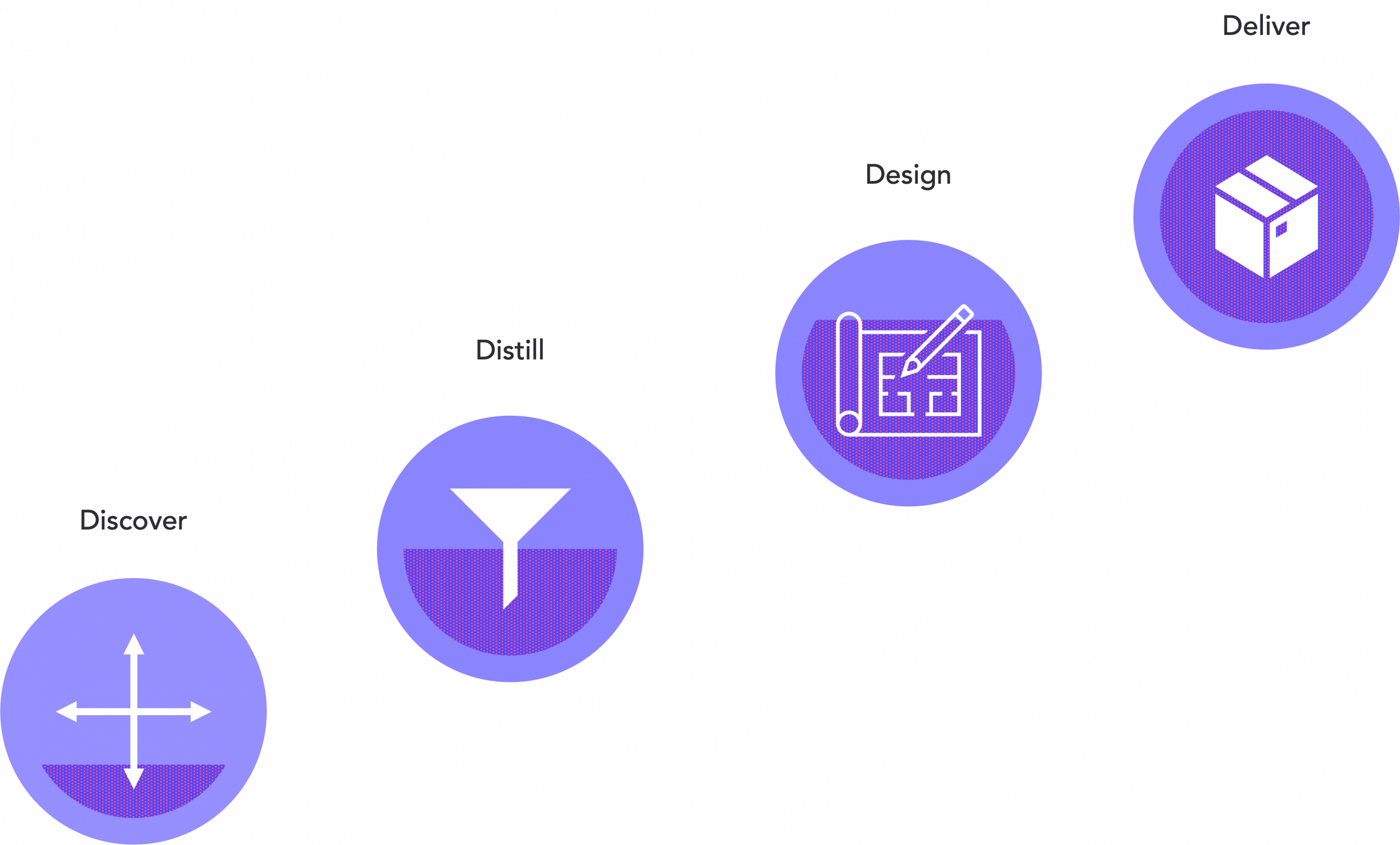

4. Our workflow model: 4D Applications, with Decide staying human

Our principles only matter if they translate into daily project work. At Z_punkt, we therefore use a simple workflow model that makes AI support practical and keeps responsibility clear.

We structure our work into four types of activities that show up in most innovation foresight projects: Discover, Distill, Design, and Deliver. Each of the four supports a different part of future readiness. Discover and Distill help organisations adapt to new realities. Design helps them explore new horizons with discipline. Deliver helps leaders and teams translate uncertainty into coordinated action. And we make one point explicit: Decide stays human. AI supports the work, but our experts at Z_punkt and the client teams remain accountable for the choices that follow.

4.1 Discover: widen the view, without losing discipline

In Discover, we build a grounded picture of what could matter. We scan signals across technology, markets, competitors, regulation, and changing needs, and we structure them into themes and early hypotheses. AI helps us work wider and faster through large volumes of material, but it does not define what matters. Our experts at Z_punkt set the search space, decide which sources count, and make sure the difference between observation and interpretation stays visible. The result is not a trend list. It is a structured opportunity space that is clear enough to guide the next step.

4.2 Distill: turn signals into meaning and testable assumptions

Distill is where foresight becomes decision support. We connect signals to plausible drivers and implications, and we make assumptions explicit so they can be tested instead of being smuggled into narratives. AI helps propose patterns, alternative interpretations, and “what would need to be true” logic. Our experts at Z_punkt review and sharpen that logic, identify where evidence is strong or weak, and define what information would actually change the view. The result is a clear frame that helps teams disagree productively and move forward with a shared logic, even when uncertainty remains.

4.3 Design: create options, then stress-test them early

In Design, we turn frames into real options and compare them under realistic constraints. This is where we deliberately avoid “nice ideas” that collapse later. AI helps generate distinct option routes and supports structured checks on desirability, feasibility, viability, and risk. Our experts at Z_punkt define the evaluation criteria, set hard constraints, and make trade-offs explicit. We also translate options into early requirements and capability implications, so the organisation sees what it would need to build, buy, or change. The result is not a single winner. It is a small set of options that can be discussed honestly, with clear strengths, weaknesses, and learning needs.

4.4 Deliver: make the work decision-ready and usable over time

Deliver is where innovation foresight becomes something leaders and teams can act on together. We translate the work into clear decision documents, leadership communication, and roadmaps that travel well across stakeholders. AI supports drafting and structuring, but our experts at Z_punkt make sure claims are traceable, assumptions are visible, and risks are stated plainly. A key element here is decision resilience and team alignment. We include learning gates, simple trigger points, and clear moments for reassessment, so recommendations can be revisited when conditions shift. The result is work that supports a decision now, enables coordinated action across teams, and remains usable when the context changes.

Decide stays human

Across all four activities, AI helps our team work faster through scanning, structuring, and comparison tasks. But decisions do not come from the tool. They come from people who own the responsibility. Our experts at Z_punkt make judgement calls throughout the work, and the final decisions remain with the client teams and leaders who will act on them. That is not a limitation. It is the condition for responsible leadership and innovation under uncertainty.

5. Where we apply AI in innovation foresight: five core use areas

The 4D workflow describes how we work. The next question clients usually ask is where AI actually helps in practice. Over time, our work has clustered into five recurring use areas. They cover the decisions that matter most in innovation foresight, and they show where AI support creates real value when it is embedded in a disciplined method. Taken together, these five use areas support three broader ambitions: adapting earlier to new realities, exploring new horizons more deliberately, and enabling leaders and teams to act under uncertainty.

5.1 Definition and evaluation of growth fields

Many projects start with a strategic need: identify where future growth could come from, and decide which opportunity spaces deserve serious attention. The difficulty is that growth fields rarely appear as clean categories. They emerge at intersections, where technology change meets shifting needs, regulatory moves, infrastructure constraints, and new value creation logic.

We use AI to widen and structure the search space early, so we can scan across domains without losing transparency. Our experts at Z_punkt then do the essential work: we turn themes into hypothesis spaces, make assumptions explicit, and connect the opportunity logic to the client’s strategic fit and capability realities. The outcome is a grounded shortlist with clear reasoning, not a generic trend list, and it is shaped to support a real decision about where to explore next.

5.2 Product and service innovation

When teams move from opportunity spaces to product and service options, speed can be misleading. AI can generate concepts quickly, but without discipline it produces “nice ideas” that collapse later, because feasibility, viability, and risk were treated as afterthoughts.

In our work, AI supports option generation and structured comparison, but we pair this immediately with early checks. We connect options to expected needs and contexts, we cross-check with internal interview insights and market evidence, and we make the key assumptions visible rather than implicit. We then pressure-test feasibility through capability requirements, data needs, integration complexity, and technical dependencies, and we add early viability logic through business model options, cost drivers, and sensitivities. Our experts at Z_punkt define the criteria, set constraints, and make trade-offs explicit. For clients, the benefit is not “more ideas.” It is earlier clarity about which options are worth prototyping, and which should be stopped early.

5.3 Technology and research prioritization

Innovation foresight often feeds into R&D choices. Many companies have strong technical teams but struggle to keep priorities aligned as the external context shifts. Portfolios become difficult to steer because information is scattered, new signals appear continuously, and decisions are revisited without a clear logic trail.

We use AI to structure portfolio material into a comparable baseline view and to connect internal efforts to external drivers such as technology momentum, regulatory trajectories, competitive moves, and changing customer needs. Our experts at Z_punkt then translate this into prioritization views that innovation teams, portfolio owners, and steering groups can use, including capability gaps, dependencies, and explicit assumptions. The outcome is a prioritization logic that can be updated as signals change, rather than a one-time snapshot that ages quickly.

5.4 Business model innovation

In many innovation initiatives, the technology is not the main problem. The business model logic is. Teams can describe an attractive solution but cannot explain how value is created, captured, and protected under realistic market conditions and plausible future shifts.

We use AI to explore and compare business model variants and to make value logic explicit, including the drivers that would make a model work or fail. We also stress-test sensitivities around cost curves, pricing, adoption barriers, and regulatory constraints, because these factors often decide whether an option remains viable across different futures. Our experts at Z_punkt keep this grounded, label assumptions clearly, and ensure the output is something innovation teams and decision makers can challenge and still use.

5.5 Ecosystem development

More and more innovation outcomes depend on partners and ecosystems. Teams need to understand who shapes the space, where new clusters of activity emerge, and which partnerships could become decisive. This is difficult to do manually because ecosystems span science, start-ups, corporate moves, standards bodies, and regulation.

We use AI to map ecosystems faster and more broadly, and to detect emerging clusters across publications, patents, start-ups, and investment signals. Our experts at Z_punkt then add the strategic reading: where control points might sit, what roles are realistic for the client, and which partner choices make sense under different future conditions. The result is not a list of logos. It is a clearer basis for ecosystem moves that fit strategic intent and capability reality.

Across all five use areas, one cross-cutting outcome matters: leadership under uncertainty. We use AI-supported foresight to make assumptions discussable, align teams around explicit choices, define trigger points for reassessment, and create a shared logic for action. This turns foresight from expert input into a capability that helps organisations move together when the future remains open.

6. What this enables for leaders, decision makers and innovation teams

Used well, AI changes the rhythm of innovation foresight work. Instead of spending most of the time collecting, cleaning, and rephrasing information, more time can be spent on the work that actually moves relevant decisions: framing the question, challenging assumptions, comparing options, and deciding what to test next.

In practice, three shifts tend to matter most. The first is coverage with focus. We can scan wider and still keep a clear view of what is relevant, because signals are structured early and evidence trails remain visible. The second is sharper reasoning. Assumptions stop hiding inside narratives and become explicit, so disagreements turn into better questions rather than longer debates. The third is earlier reality checks. Options are stress-tested sooner, so weak routes fail fast, and promising routes become clearer before time and budget are committed.

The result is not a bigger folder of AI output. It is fewer loops that go nowhere. Fewer “one more round” meetings. More decisions that are easier to explain, easier to defend, and easier to revisit when the context changes, because the logic and the dependencies are visible.

This is also what makes AI useful beyond one project. When sources, assumptions, and review points are handled consistently, the work becomes repeatable across topics and teams. Innovation foresight stops depending on individual prompt skills and starts behaving like a capability that can be trusted under pressure.

Closing

Innovation foresight is a practical discipline. It helps organisations see change earlier, think through implications, and prepare before decisions become urgent or come too late. AI can be a strong partner in that work, but only if it is handled with discipline and craft.

What we have shown in this paper is simple. AI helps when it expands what we can explore and helps us structure complex signals earlier, without turning the work into noise. It helps when it sharpens how options are compared and stress-tested. And it helps when quality and responsibility are kept explicit, so the work remains trustworthy when it travels into real decisions.

Done right, AI does not change what foresight is. It strengthens an organisation’s ability to adapt to new realities, explore new horizons, and lead with responsibility when the future remains uncertain. In that sense, AI-augmented foresight does not only improve analysis. It helps organisations move the future forward.